VoIP troubleshooting is frustrating because the same symptom can come from completely different places. That’s why this guide is a practical field workflow you can reuse: how to isolate whether the issue is the device, LAN/Wi-Fi, router/firewall/NAT, ISP path, PBX, or provider; which tools to use; and how to build real troubleshooting skills over time so you stop guessing and start fixing issues methodically.

What does VoIP troubleshooting involve?

VoIP troubleshooting is the process of isolating where the failure lives before you try to “fix” anything. You narrow the problem down layer by layer, because call quality, ringing, registration, and audio issues usually originate in different parts of the stack.

Endpoint/headset/softphone vs desk phone — First, confirm whether it’s a single-user/device issue (bad headset, Bluetooth problems, app permissions, outdated firmware) or something affecting all phones. If only one device is impacted, you can often fix it locally without touching the network.

LAN vs Wi-Fi — Test the same call on Ethernet vs Wi-Fi. Wi-Fi introduces roaming, interference, and congestion, all of which commonly cause jitter and packet loss. If the issue disappears on a wired connection, you’ve narrowed the root cause to the wireless side.

Router/firewall/SIP ALG/NAT — This layer breaks VoIP in sneaky ways: SIP ALG rewriting packets, NAT timeouts causing calls to drop, or blocked RTP ports causing one-way/no audio. If issues are consistent across multiple users at one site, this is often the culprit.

ISP/WAN path — Even with a perfect internal network, the path to your provider can introduce latency, jitter, or packet loss—especially during peak hours. This is where you validate whether the issue is “inside the building” or “on the way out.”

PBX vs provider — Determine whether the problem is inside your PBX/UC platform (routing rules, registration state, codec/DTMF settings) or on the provider side (trunk configuration, inbound routing, upstream carrier issues). This prevents wasting time changing local settings when the failure is upstream.

Signaling (SIP) vs media (RTP) — SIP controls call setup (ringing/answer/teardown); RTP carries the audio. A call that rings and connects but has one-way audio is usually an RTP/NAT/firewall issue, not “SIP is down.” Separating signaling vs media is one of the biggest “level-up” skills in VoIP troubleshooting.

FAQ About VoIP Troubleshooting

- What are the most common VoIP issues?

Here are five common ones you’ll see repeatedly in real deployments:

Choppy/robotic audio (often jitter/packet loss or Wi-Fi congestion)

Dropped calls after 30–60 seconds (often NAT/firewall timers or SIP ALG interference)

Echo/background noise or low volume (often headset/acoustics or device gain settings)

One-way audio / no audio (often RTP blocked, NAT mismatch, wrong IP in SDP)

Phones not ringing / missed inbound calls (often routing/registration issues or mobile sleep/background restrictions)

- What is the main disadvantage of VoIP?

The biggest disadvantage of VoIP is its reliance on a stable internet connection. If bandwidth is insufficient or the network experiences jitter, latency, or packet loss, call quality suffers. VoIP systems are also vulnerable to power outages and poorly configured routers.

What are the symptoms of VoIP issues?

The fastest way to troubleshoot VoIP is to classify the symptom before you guess the cause. Write it down in one sentence, define what “good” would look like, and note when it happens. A simple template:

Symptom (one sentence): What exactly is happening?

“Good” baseline: What should happen instead?

When/where: Only on Wi-Fi or also wired? Only inbound or also outbound? Only one user or everyone? Only at certain times?

Here are the symptom categories we’ll use throughout this guide:

| Symptom | What “good” looks like | When it usually shows up | Likely layer to check first |
|---|---|---|---|
| Choppy/robotic audio | Smooth, consistent audio with no dropouts | Peak network usage, Wi-Fi, busy office hours | Wi-Fi/LAN congestion, packet loss/jitter, QoS |
| Delayed audio (lag) | Natural back-and-forth conversation | Cross-region calls, overloaded WAN, VPN paths | WAN latency, routing path, bandwidth contention |
| Dropped calls | Calls stay connected until one party hangs up | After a fixed time (30s/5–15 min) or randomly | NAT/firewall timers, SIP ALG, session refresh |
| One-way audio / no audio | Both sides hear each other clearly | Often after answer; sometimes only inbound/outbound | RTP/media path, firewall ports, NAT/SDP mismatch |
| Calls won’t ring / inbound doesn’t reach users | Inbound routes to the correct user/queue | Inbound only; outbound may still work | PBX routing/DID mapping, registration, provider routing |
| Phones deregister / registration flaps | Devices remain registered and reachable | Overnight, after network changes, intermittent | Network stability/DNS, NAT keepalive, credentials |
| DTMF/IVR keypad fails | “Press 1/2/3” works reliably every time | IVRs, voicemail PINs, conference bridges | DTMF method mismatch (RFC2833/4733 vs INFO vs inband), codec/transcoding |
| “VoIP not working” (general outage) | Registration and inbound/outbound work normally | Multiple users/systems impacted at once | ISP/WAN outage, PBX/provider incident, firewall change |

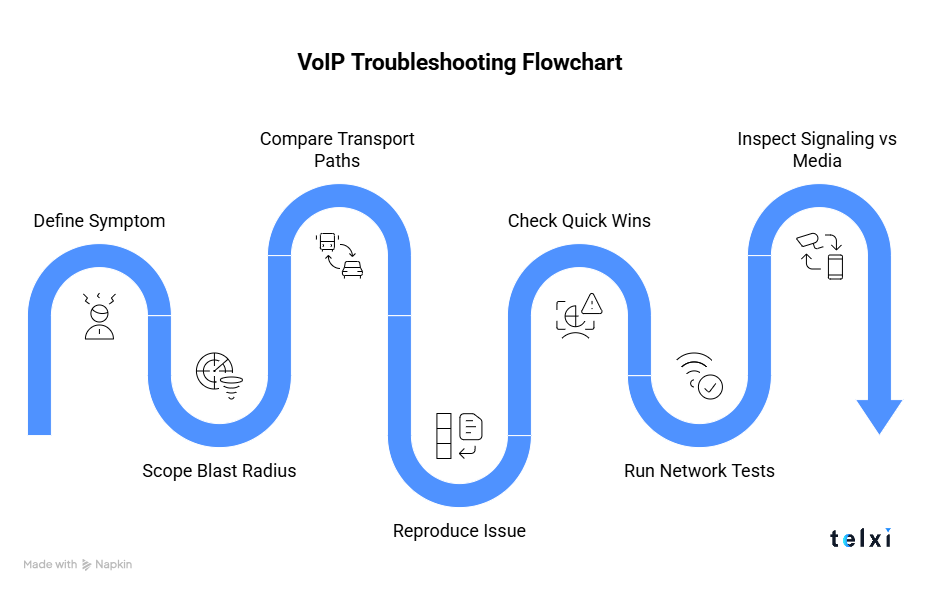

VoIP Troubleshooting Flowchart

A VoIP troubleshooting flowchart is a step-by-step decision path that helps you diagnose call issues in the right order. It walks you through a sequence so you can quickly narrow the root cause and apply the correct fix with evidence, not assumptions.

Step 1: Define the exact symptom

Write the symptom in one sentence and make it testable. Include:

What happened: choppy audio, one-way audio, calls dropping, phones unregistered, etc.

What “good” looks like: clear two-way audio, no drops, inbound rings correctly, etc.

When it happens: specific timestamps, time-of-day patterns, only after answer, only inbound, only over Wi-Fi, etc.

Step 2: Scope the blast radius

This step tells you whether the problem is local (one endpoint) or systemic (network/provider/PBX):

One user vs multiple users vs everyone

If it’s one user, suspect endpoint/headset/app settings first. If it’s everyone at a site, suspect LAN/Wi-Fi/router/ISP.

One device type vs all device types

Only softphones affected? Could be PC audio drivers, VPN, or app config. Only desk phones? Could be VLAN/QoS/DHCP/firmware. Both? More likely network/provider.

One location vs multiple locations

One office only usually points to that site’s network edge (router/firewall/ISP). Multiple sites at once can indicate PBX/provider issues or a shared WAN change.

Inbound only vs outbound only vs both

Inbound-only issues often point to routing/DID mapping/provider inbound. Outbound-only can point to trunk auth/routing/policy. Both suggests broader connectivity or PBX/provider trouble.

Step 3: Compare transport paths

Before you dig into SIP traces or change PBX settings, do one simple isolation test: does the problem happen on every connection type, or only one? This is the quickest way to separate “the phone system is broken” from “the network path is unstable.”

If the issue disappears when you move from Wi-Fi to Ethernet, you’ve likely found a Wi-Fi contention/interference problem (or roaming behavior). If it disappears when you move from the office network to a mobile hotspot, the issue is likely in the office router/firewall/ISP path. If it follows the user across every network, it’s more likely endpoint/app configuration, account/registration issues, or PBX/provider behavior.
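As a rough illustration, the path comparison above can be written as a small decision function. This is only a sketch in Python: the function name, arguments, and return labels are invented for this example, and real incidents will not always reduce to three clean yes/no answers.

```python
def likely_layer(fails_on_wifi: bool, fails_on_ethernet: bool, fails_on_hotspot: bool) -> str:
    """Map the three path tests to the layer worth checking first."""
    if fails_on_wifi and not fails_on_ethernet:
        return "wifi"  # contention, interference, or roaming
    if fails_on_ethernet and not fails_on_hotspot:
        return "office-edge"  # office router/firewall/ISP path
    if fails_on_wifi and fails_on_ethernet and fails_on_hotspot:
        return "endpoint-or-upstream"  # endpoint/app config, account, PBX/provider
    return "inconclusive"  # results don't match a known pattern; retest


print(likely_layer(fails_on_wifi=True, fails_on_ethernet=True, fails_on_hotspot=False))
```

The "inconclusive" branch matters: if the answers contradict each other, it is usually better to repeat the tests than to force a conclusion.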

Step 4: Reproduce the issue

Troubleshooting gets exponentially easier when you can point to repeatable evidence instead of “it happens sometimes.” Your goal is to either reproduce the problem on demand or collect a small set of “known bad” calls that share the same pattern.

Try to capture at least two failed calls and one normal call, with exact timestamps and notes on the environment (device, network type, inbound/outbound). This lets you compare logs and traces side-by-side and prevents the classic trap of chasing a ghost that only appears once a day.

Step 5: Check quick wins

Now you can do the fast checks that fix a surprising number of VoIP incidents—but only after you’ve captured timestamps/examples. The goal here is to eliminate common misconfigurations (especially at the network edge) without turning the issue into a moving target.

Start by checking recent changes (router/firewall updates, ISP changes, VPN rollout, Wi-Fi changes). If your environment uses SIP trunks, verify SIP ALG is disabled on the router/firewall and confirm there aren’t new firewall rules blocking SIP/RTP. If only one user is affected, swap the headset, test another device, and confirm the softphone/OS isn’t suppressing audio or background calling.

Step 6: Run basic network tests

At this point you’re validating whether the network can carry real-time voice reliably. You’re looking for symptoms like latency spikes, packet loss, jitter, or congestion during busy periods—because VoIP doesn’t need huge bandwidth, but it needs stable delivery.

Run simple tests from the affected site/user: ping/latency to the PBX/provider edge, an mtr/traceroute to spot unstable hops, and a bandwidth sanity check during peak hours. If the issue happens only at certain times of day, test during those windows—many “VoIP is random” complaints are actually congestion patterns.
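If you log ping results during the problem window, a few lines of Python can turn them into the numbers that matter for voice. The sketch below assumes you have already collected round-trip times in milliseconds, with `None` standing in for a lost probe. The function name and the simple jitter definition (mean difference between consecutive replies) are choices made for this example, not a standard.

```python
from statistics import mean


def summarize_rtts(rtts_ms):
    """Summarize ping round-trip times; None marks a lost probe."""
    if not rtts_ms:
        raise ValueError("need at least one probe result")
    received = [r for r in rtts_ms if r is not None]
    loss_pct = 100.0 * (len(rtts_ms) - len(received)) / len(rtts_ms)
    # Simple jitter estimate: mean absolute difference between consecutive replies.
    diffs = [abs(b - a) for a, b in zip(received, received[1:])]
    return {
        "loss_pct": loss_pct,
        "avg_ms": mean(received) if received else None,
        "max_ms": max(received) if received else None,
        "jitter_ms": mean(diffs) if diffs else 0.0,
    }


samples = [20.0, 22.0, None, 60.0, 21.0]  # illustrative values, not real measurements
print(summarize_rtts(samples))
```

Comparing the summary from a quiet period with one from the complaint window makes short spikes visible that an average speed test would hide.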

Step 7: Inspect signaling vs media (SIP vs RTP)

This is the “level up” step: separate whether the call is failing during setup (SIP) or after it connects (RTP/media). If the phone rings, the call answers, but audio is missing or one-way, that’s usually a media path issue—not a SIP registration problem.

Use your PBX call logs/trace (or a ladder diagram if available) to see whether calls are establishing cleanly (INVITE → 200 OK → ACK). Then confirm RTP is flowing both directions. One-way audio is often caused by NAT/firewall behavior, wrong IPs in SDP, blocked RTP ports, or double NAT.
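The signaling-versus-media split can be sketched as a tiny classifier. The inputs here are hypothetical: they assume you have already read the call's SIP trace (did you see 200 OK and ACK?) and counted RTP packets in each direction from a capture or PBX stats.

```python
def classify_call(got_200_ok: bool, got_ack: bool, rtp_sent: int, rtp_received: int) -> str:
    """Decide whether a failed call points at SIP setup or the RTP media path."""
    if not (got_200_ok and got_ack):
        return "signaling"  # the call never finished INVITE -> 200 OK -> ACK
    if rtp_sent == 0 and rtp_received == 0:
        return "media: no audio"
    if rtp_sent == 0 or rtp_received == 0:
        return "media: one-way audio"
    return "setup and media look ok"


print(classify_call(got_200_ok=True, got_ack=True, rtp_sent=1500, rtp_received=0))
```

A "media" result sends you to NAT, firewall, and SDP checks; a "signaling" result sends you to registration, routing, and SIP response codes.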

How to Fix Common VoIP Issues

Most VoIP fixes come down to three things: stabilizing the network (loss/jitter/latency), eliminating edge-device problems (SIP ALG, NAT timers, port handling), and separating signaling from media so you’re fixing the right thing.

Troubleshooting VoIP call quality issues (jitter, loss, latency)

Start by figuring out whether the problem lives on Wi-Fi, the LAN, the WAN path, or the endpoint. The simplest isolation test is to move one affected user from Wi-Fi to Ethernet (or from office network to mobile hotspot) and see if the symptom changes. If quality improves immediately, you’ve narrowed the cause without touching the PBX or provider.

Once you’ve scoped it, the fixes are usually straightforward: use wired connections where possible, reduce contention during peak times (large uploads, backups, video meetings), and implement QoS so voice traffic isn’t competing equally with everything else. If Wi-Fi is the culprit, focus on coverage and interference: stronger signal, fewer hops, modern access points, and avoiding congested channels. The key is to monitor packet loss and jitter during the exact time the issue occurs—because “VoIP sounds bad” often correlates with short spikes, not average bandwidth.

What to check when calls keep dropping

Dropped calls are often caused by edge-device behavior rather than “bad VoIP service.” The first high-impact check is SIP ALG on the router/firewall—disable it unless you have a very specific reason to keep it on, because it commonly breaks SIP by rewriting headers and confusing NAT behavior.

Next, look at NAT and firewall session timers. If calls drop at a consistent time interval (e.g., around 30 seconds, 60 seconds, or a few minutes), that’s a strong signal that a timer or keepalive/session refresh setting is expiring. Make sure the device isn’t closing UDP mappings too aggressively and that SIP/RTP is allowed through reliably (correct port handling, no “helpful” rewrites). Also confirm router firmware is current and stable—some call-drop issues trace back to buggy firmware or security features that silently interfere with real-time traffic. Finally, verify that session refresh behavior (re-INVITE/UPDATE/Session-Timers) matches expectations end-to-end; mismatches here can cause calls that connect fine to drop mid-call.
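One quick way to test the "consistent interval" theory is to pull the durations of dropped calls from your call records and check whether they cluster. The sketch below is a heuristic only: the function name, the 3-second tolerance, and the 80% threshold are assumptions chosen for illustration.

```python
from statistics import median


def looks_like_timer(drop_durations_s, tolerance_s=3.0, min_calls=3):
    """True if most dropped calls ended within tolerance_s of the median duration."""
    if len(drop_durations_s) < min_calls:
        return False  # too few samples to call it a pattern
    mid = median(drop_durations_s)
    near = [d for d in drop_durations_s if abs(d - mid) <= tolerance_s]
    return len(near) / len(drop_durations_s) >= 0.8


print(looks_like_timer([31, 30, 32, 30]))      # durations cluster around 30 s
print(looks_like_timer([12, 340, 75, 1800]))   # durations are scattered
```

If the durations cluster, look at NAT/firewall timeouts and session refresh settings; if they are scattered, network instability is a more likely lead.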

How to troubleshoot one-way audio or no audio

If calls connect but audio is missing (or only one party can hear), you’re almost always dealing with an RTP media path problem, not a SIP “call setup” problem. The common causes are RTP being blocked, NAT mismatches, double NAT, or the PBX/endpoint advertising a private IP instead of the public IP in SDP, which causes the far end to send audio to an unreachable address. Another frequent culprit is firewall “pinholes” that open briefly and then close, so audio works for the first few seconds and then disappears.

Fixes usually focus on making RTP predictable end-to-end: confirm the correct RTP port ranges are allowed through your firewall, eliminate double NAT where possible, and make sure NAT settings on the PBX/phone reflect the real public IP. If you’re on-prem behind NAT (or have complex routing), an SBC can dramatically reduce one-way audio by anchoring media, handling NAT traversal cleanly, and hiding internal topology. Once you adjust settings, validate with a repeatable test call and confirm RTP flows both directions.
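To make the "wrong IP in SDP" check concrete, here is a minimal Python sketch that pulls IPv4 addresses from SDP connection lines (`c=IN IP4 ...`) and flags any in the RFC 1918 private ranges. The function names and sample SDP are illustrative. A private address is only a problem when the far end sits on the other side of a NAT, so treat a hit as a lead, not a verdict.

```python
import ipaddress

# RFC 1918 private ranges, which are not routable across the public internet.
RFC1918 = [ipaddress.ip_network(n) for n in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")]


def sdp_connection_addresses(sdp: str):
    """Return IPv4 addresses found in SDP connection lines (c=IN IP4 <addr>)."""
    addrs = []
    for line in sdp.splitlines():
        parts = line.strip().split()
        if len(parts) == 3 and parts[0] == "c=IN" and parts[1] == "IP4":
            addrs.append(parts[2].split("/")[0])  # drop an optional TTL suffix
    return addrs


def private_media_addresses(sdp: str):
    """Return connection addresses that fall inside RFC 1918 space."""
    flagged = []
    for addr in sdp_connection_addresses(sdp):
        try:
            ip = ipaddress.ip_address(addr)
        except ValueError:
            continue  # hostname rather than an IP literal; skip it
        if any(ip in net for net in RFC1918):
            flagged.append(addr)
    return flagged


sample_sdp = """v=0
o=- 1 1 IN IP4 192.168.1.10
s=-
c=IN IP4 192.168.1.10
t=0 0
m=audio 10000 RTP/AVP 0 101
"""
print(private_media_addresses(sample_sdp))
```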

What to do when phones do not ring or unregister

When phones don’t ring or keep showing unregistered, start by separating registration problems from routing problems. If the device is unregistered, inbound calls can’t reach it; if it’s registered but still doesn’t ring, the issue may be PBX routing, DND, or call-forward rules. Registration failures commonly come from DNS issues, credential/auth problems, intermittent connectivity, DHCP conflicts or IP changes, and network instability that causes registration to flap. On softphones/mobile apps, background restrictions (battery optimization, sleep modes, OS permissions) can prevent the app from staying reachable even when it looks “installed correctly.”

The most reliable fix path is to confirm the REGISTER flow is working and stable: the phone registers, stays registered, and refreshes before NAT bindings expire. If devices drop off after some time, you may need to adjust registration intervals or enable keepalives so the router/firewall doesn’t silently time out the session. Also verify DNS resolution is consistent, credentials are correct, and the network isn’t briefly dropping packets during peak usage. For mobile, explicitly whitelist the VoIP app from battery optimization and ensure notifications/background activity are allowed.
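The timing rule behind "refresh before NAT bindings expire" is simple arithmetic, sketched below. The parameter names and the example numbers (a 3600-second registration, a 30-second UDP timeout, a 20-second keepalive) are assumptions for illustration; check the real values on your phones and router.

```python
def stays_reachable(register_interval_s, nat_udp_timeout_s, keepalive_interval_s=None):
    """True if some packet crosses the NAT before its UDP mapping would expire."""
    intervals = [register_interval_s]
    if keepalive_interval_s is not None:
        intervals.append(keepalive_interval_s)
    # The most frequent traffic is what keeps the mapping open.
    return min(intervals) < nat_udp_timeout_s


print(stays_reachable(3600, 30))                           # no keepalive
print(stays_reachable(3600, 30, keepalive_interval_s=20))  # keepalive enabled
```

If the first case matches your setup, inbound calls can fail between registrations even though the phone shows as registered.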

Fixing IVR and DTMF problems

DTMF issues are classic “everything sounds fine but nothing works” problems: callers press keys and the IVR doesn’t respond, or digits are missed/duplicated. The most common reason is a DTMF method mismatch—one side sends RFC2833/RFC4733 events while the other expects SIP INFO, or inband tones are being used but the codec/transcoding path distorts them. In other words, the call audio can be perfect while DTMF fails because the signaling method isn’t aligned.

The fix is to standardize DTMF delivery end-to-end across phone/softphone, PBX, SBC, and provider. In most VoIP environments, RFC2833/RFC4733 is the most reliable default. If you must use inband DTMF, keep the media path simple (ideally G.711) and avoid unnecessary transcoding. After changes, test the workflows that matter—IVR navigation, voicemail PIN entry, conference controls—because DTMF problems often only reveal themselves in those specific paths.
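A quick way to see whether RFC 4733 events were negotiated is to look for `telephone-event` in the SDP `a=rtpmap` lines of the offer and the answer. The Python sketch below does that; the function name and the sample lines are illustrative, and payload type 101 is a common choice, not a fixed value.

```python
def telephone_event_payloads(sdp: str):
    """Return RTP payload types that a=rtpmap lines map to telephone-event."""
    payloads = []
    for line in sdp.splitlines():
        line = line.strip()
        if line.startswith("a=rtpmap:"):
            payload_type, _, encoding = line[len("a=rtpmap:"):].partition(" ")
            if encoding.lower().startswith("telephone-event/"):
                payloads.append(int(payload_type))
    return payloads


offer = "m=audio 10000 RTP/AVP 0 101\na=rtpmap:0 PCMU/8000\na=rtpmap:101 telephone-event/8000\n"
answer = "m=audio 20000 RTP/AVP 0\na=rtpmap:0 PCMU/8000\n"
print(telephone_event_payloads(offer), telephone_event_payloads(answer))
```

If the offer lists `telephone-event` and the answer does not, the two sides have not agreed on RFC 4733 events, and digits will either fall back to another method or be lost.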

Best VoIP Troubleshooting Tools

The fastest VoIP troubleshooting happens when you can see both sides of the story: what SIP signaling is doing (setup/teardown/errors) and what RTP media is doing (is audio actually flowing both ways, and is it clean). The tools below help you capture evidence, isolate the layer causing the issue, and prove whether the problem is endpoint, network, PBX, or provider.

Packet and signaling tools

Wireshark (SIP/RTP inspection)

Best for understanding call setup (INVITE → 200 OK → ACK), spotting obvious SIP errors (401/403/404/408/486/503), and confirming whether RTP is flowing both directions (the #1 check for one-way/no audio). Wireshark is also ideal for comparing a “good call” capture vs a “bad call” capture so you can see what changed.

tcpdump / tshark (server-side captures)

When you can’t run Wireshark on the PBX (or you’re troubleshooting a cloud VM), capture packets on the server with tcpdump and analyze later in Wireshark. tshark gives you Wireshark-style decoding from the command line, which is handy for quick checks or automations.
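For repeatable server-side captures, it can help to build the tcpdump command in a small script so the filter stays the same every time. This Python sketch only assembles the argument list. The interface name, the output filename, UDP port 5060 for SIP, and the 10000-20000 RTP range are all assumptions; substitute the values your PBX actually uses. SIP over TCP or TLS would need a different filter.

```python
def capture_command(interface, pcap_path, sip_port=5060, rtp_ports=(10000, 20000)):
    """Build a tcpdump argument list that saves SIP and RTP traffic to a pcap file."""
    capture_filter = f"udp port {sip_port} or udp portrange {rtp_ports[0]}-{rtp_ports[1]}"
    # -i: capture interface, -n: no name resolution, -w: write raw packets to a file
    return ["tcpdump", "-i", interface, "-n", "-w", pcap_path, capture_filter]


cmd = capture_command("eth0", "bad-call.pcap")
print(cmd)
```

You could pass the list to `subprocess.run(cmd)` with sufficient privileges, stop the capture after the test call, and open the resulting file in Wireshark.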

VoIP monitoring and QoE tools

These tools help you stop troubleshooting blind by measuring voice quality signals continuously and correlating them with network events.

Examples to consider (pick based on your environment and budget):

VoIPmonitor-style platforms (SIP/RTP capture + MOS/jitter/loss analysis)

SolarWinds VNQM (enterprise VoIP monitoring)

PRTG (broad monitoring with voice/network sensors)

Site24x7 (cloud monitoring for performance/availability)

Capsa / VoIP Spear (packet and VoIP-focused troubleshooting tools)

The key features to look for are: jitter/packet loss visibility, MOS-style scoring, call detail drilldowns, and alerts that trigger when quality degrades (not after users complain).

Network tools for voice troubleshooting

Ping / mtr / traceroute

Useful for spotting latency spikes, unstable hops, or routing changes. mtr is especially helpful because it shows variability over time rather than one-off results.

iperf3 (UDP mode)

Great for testing whether your network can handle real-time traffic under load. UDP tests can reveal packet loss and jitter patterns that don’t show up in simple “speed tests.”

Continuous monitoring (PRTG / Site24x7 style)

VoIP issues are often intermittent and time-based. Continuous monitoring helps you prove that “calls sound bad at 10am every day” correlates with congestion, Wi-Fi contention, or WAN loss—so you can fix root cause instead of rebooting phones forever.

VoIP Troubleshooting with Wireshark

Wireshark can feel overwhelming because it shows everything, but VoIP troubleshooting gets much easier when you focus on just two questions: Did SIP set up the call correctly? And did RTP audio flow in both directions with acceptable quality?

If you want more practice material, the training resources section later in this guide points to Wireshark VoIP labs and walkthroughs.

1) Capture the right traffic

Start with a capture taken as close as possible to the problem source (affected PC, phone VLAN mirror, PBX interface, or SBC). If you can, capture one normal call and one failing call back-to-back. That comparison is gold.

2) Filter down to SIP first

Use SIP filters to reduce noise:

sip (shows SIP signaling)

sip.Method == "INVITE" (find call starts)

sip.Method == "REGISTER" (registration problems)

What you want to see in a healthy call setup:

INVITE (call attempt)

100 Trying / 180 Ringing / 183 Session Progress (normal progress)

200 OK (call answered; usually contains SDP)

ACK (finalizes setup)

If the call doesn’t connect, look for clear failures:

401/407 (auth challenge—often fine once, bad if it loops)

403 (forbidden/blocked)

404 (wrong destination)

408 (timeout)

486 (busy)

5xx/6xx (server/global failures)
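When you are scanning many failed calls, a small lookup keeps the interpretation consistent across the team. The Python sketch below encodes the list above, plus the general SIP status classes (1xx provisional through 6xx global failure). The names `SIP_HINTS` and `explain_status` are made up for this example.

```python
# Hints for the specific codes called out in this guide.
SIP_HINTS = {
    401: "auth challenge (fine once, a problem if it loops)",
    407: "proxy auth challenge (fine once, a problem if it loops)",
    403: "forbidden/blocked",
    404: "wrong destination",
    408: "timeout",
    486: "busy",
}

# Fallback by status class (first digit of the code).
SIP_CLASSES = {
    1: "provisional (call in progress)",
    2: "success",
    3: "redirection",
    4: "client error",
    5: "server failure",
    6: "global failure",
}


def explain_status(code: int) -> str:
    """Return a short troubleshooting hint for a SIP response code."""
    if code in SIP_HINTS:
        return SIP_HINTS[code]
    return SIP_CLASSES.get(code // 100, "not a valid SIP status code")


for code in (180, 407, 486, 503):
    print(code, explain_status(code))
```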

3) Check SDP inside SIP

Open the SIP INVITE and 200 OK and look at the SDP:

IP address and port being offered/answered for RTP

Codecs being offered/selected

Whether the negotiated media IP looks wrong (e.g., a private IP where the far end can’t reach it)

This is where many one-way/no-audio issues reveal themselves: SIP succeeds, but SDP points RTP at the wrong place.

4) Confirm RTP is flowing both ways

Now filter RTP:

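rtp (shows RTP packets if Wireshark detects them)

Use Telephony → RTP → RTP Streams to list the streams Wireshark found. If RTP isn’t auto-detected (for example, because the capture is missing the SIP setup), right-click one of the UDP packets, choose Decode As, and set the protocol to RTP.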

What you want:

Two RTP streams (one in each direction) once the call is connected

Reasonable packet timing (not huge gaps)

No obvious stream that starts and then stops immediately

If you only see RTP one way, you’ve essentially proven a media path/NAT/firewall issue.

5) Look for jitter/loss clues

Wireshark can estimate RTP stats:

Telephony → RTP → Stream Analysis

Check for high jitter, sequence gaps (loss), and abnormal delay spikes. You’re not trying to “perfect the MOS”—you’re trying to confirm whether the network is misbehaving.
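For intuition about the jitter figure, here is a minimal Python sketch of the interarrival jitter estimate defined in RFC 3550 (a running average that moves 1/16 of the way toward each new transit-time difference), plus a simple gap count on sequence numbers. It assumes an 8 kHz RTP clock, as used by G.711, and ignores sequence-number wraparound. The function names and sample packets are illustrative, and this is not a reproduction of Wireshark's own code.

```python
def interarrival_jitter_ms(packets, clock_rate=8000):
    """packets: (arrival_time_s, rtp_timestamp) tuples in arrival order."""
    jitter = 0.0  # kept in RTP timestamp units
    prev_transit = None
    for arrival_s, rtp_ts in packets:
        transit = arrival_s * clock_rate - rtp_ts
        if prev_transit is not None:
            d = abs(transit - prev_transit)
            jitter += (d - jitter) / 16.0  # RFC 3550 smoothing
        prev_transit = transit
    return jitter / clock_rate * 1000.0


def lost_packets(seq_numbers):
    """Count missing sequence numbers (no wraparound handling)."""
    expected = max(seq_numbers) - min(seq_numbers) + 1
    return expected - len(set(seq_numbers))


# 20 ms packets (160 timestamp units each); the third one arrives 10 ms late.
late = [(0.00, 0), (0.02, 160), (0.05, 320)]
print(interarrival_jitter_ms(late), lost_packets([1, 2, 3, 5, 6, 9]))
```

The smoothing explains why a single late packet barely moves the jitter figure while sustained variation pushes it up quickly.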

FAQ About Wireshark

- Is using Wireshark legal?

Yes—when you capture traffic you’re authorized to monitor (your own network, your own devices, your own systems) and you follow your company policies and local laws. It becomes a legal/compliance problem if you capture data on networks or devices you don’t have permission to inspect, or if you store sensitive content without proper controls.

- What is the difference between Wireshark and Nmap?

Wireshark is a packet analyzer (it inspects actual network traffic like SIP and RTP). Nmap is a network scanner (it discovers hosts, open ports, and services). In VoIP troubleshooting, Wireshark helps you see what happened during a call; Nmap helps you verify what’s reachable and what ports/services are exposed.

- Does Wireshark require coding?

No. Most VoIP troubleshooting in Wireshark is done with filters, call-flow inspection, and RTP stream analysis. Coding can help for automation (tshark scripts), but it’s optional.

Best training resources for learning VoIP troubleshooting

Getting good at VoIP troubleshooting is mostly about building pattern recognition. These resources help you learn both the theory and the real-world troubleshooting instincts.

Vendor academies and docs (PBX-specific)

Start with the official docs/training for the PBX or platform you actually manage. Vendor guides usually include the exact settings that cause the most pain in production (NAT, RTP port ranges, SIP trunk templates, registration timers, DTMF modes) and how to pull traces/logs from that platform. This is the fastest way to reduce “platform-specific” mistakes that generic VoIP guides won’t catch.

Wireshark VoIP analysis guides and labs

Wireshark becomes useful when you practice with real call examples. Look for beginner-friendly VoIP labs that teach you how to follow a call from INVITE → 200 OK → ACK, then verify RTP streams both ways and spot common errors (timeouts, retransmissions, wrong SDP IPs, missing RTP).

Community threads and real incident writeups

This is where you level up quickly, because you see real symptoms, real configs, and real fixes—plus what didn’t work. A great place to learn is r/VOIP on Reddit, where admins share SIP traces, Wireshark captures, routing oddities, and troubleshooting approaches. Reading a handful of threads teaches you how experienced people think through problems.

Telxi blog

For ongoing skill-building, it helps to keep a “field guide” you can reference when issues pop up. The Telxi blog is a good internal learning resource on common VoIP problem patterns and their causes—useful for training new team members and standardising your troubleshooting approach across the team.

When to escalate to your VoIP provider?

Escalate once you’ve done enough isolation to say what’s broken, where it’s likely broken, and how to reproduce it. The goal is to give them the evidence they need to stop guessing and start tracing the exact call behavior on their side.

What to include in your escalation:

Exact timestamps of 2–3 failed calls (include timezone)

Caller/callee identifiers (numbers or extensions) and call direction (inbound/outbound)

Symptom in one sentence (e.g., “one-way audio after answer” or “drops at ~30 seconds”)

Scope and pattern (one user vs site-wide, only Wi-Fi vs also wired, only at peak times, only inbound, etc.)

Network context (public IP of the site, firewall/router model if relevant, any recent changes)

SIP trace / ladder diagram from your PBX if available

PCAP (Wireshark/tcpdump) if you captured it—especially for one-way audio/drops

What you already tested (e.g., disabled SIP ALG, tested wired vs Wi-Fi, tested hotspot) and the results

Why Companies Choose Telxi as Their VoIP Provider

Telxi is commonly chosen by companies that want a modern VoIP stack they can deploy quickly, scale across regions, and troubleshoot with better visibility.

Global voice + numbers under one roof

Telxi positions itself around SIP trunking and DID coverage for international and multi-site businesses, which helps teams standardize voice across locations instead of stitching together multiple local providers.

Fast provisioning (portal and API)

For IT teams and MSPs, speed matters. Telxi highlights provisioning via a self-service portal and API, which is useful when you need to spin up trunks/numbers quickly for new sites, campaigns, or deployments.

Security and fraud prevention built into the service

VoIP fraud and unwanted traffic are real operational risks. Telxi emphasizes security and fraud prevention features as part of its offering, which is a common selection criterion for business calling.

Operational visibility and analytics

Teams troubleshooting call issues benefit from visibility into call activity and performance. Telxi highlights analytics-style capabilities as part of its platform positioning.

24/7 technical support

When voice is business-critical (sales/support), response time matters. Telxi states 24/7 technical support availability, which is a common checkbox for companies that can’t afford long outages.

FAQ About VoIP Troubleshooting

- How to troubleshoot a VoIP system?

Start by classifying the symptom (choppy audio, drops, one-way audio, no ringing, deregistration, DTMF issues), then scope whether it affects one user or everyone. Next, compare paths (Wi-Fi vs Ethernet vs hotspot) and separate SIP signaling (call setup) from RTP media (audio). Only then change one thing at a time, retest, and document the result, so you know what actually fixed it.

- What is a common issue with VoIP?

The most common recurring issues are call quality problems caused by network conditions (packet loss, jitter, latency), plus edge/network misconfigurations (NAT/firewall, SIP ALG) that create drops or one-way audio.

- How to check if VoIP is working?

Do a quick “three-layer” check:

Registration: are phones/apps registered and reachable?

Call test: can you make an outbound call and receive an inbound call?

Media test: is audio two-way, stable (no choppiness), and does DTMF work in an IVR?

If any one layer fails, you’ve already narrowed where to look.

- Why is my VoIP phone not receiving calls?

Most often it’s one of these: the device isn’t registered, inbound routing/DID mapping is wrong, the phone is offline/asleep (common on mobile apps), or a network/NAT/firewall issue is blocking signaling or reachability. Confirm registration first, then test inbound routing, then check firewall/SIP ALG/NAT behavior.